Week 1–2: Hardware & Baseline Inference

Craig Nielsen

March 2, 2026

Let's take some notes on the hardware.


Raspberry Pi 5 vs Jetson Nano vs Jetson Orin Nano Super

Quick Verdict

Jetson Orin Nano Super Developer Kit is the clear winner for AI vision + quantization: about 1.7× the AI compute of the standard Orin Nano (67 vs 40 TOPS) at the same price point.

Full Comparison

| Feature | Raspberry Pi 5 | Jetson Nano (2019) | Jetson Orin Nano Super |

|---|---|---|---|

| Price | ~€70–80 | ~€100–150 | ~€250 (devkit) |

| CPU | Cortex-A76 × 4 | Cortex-A57 × 4 | Cortex-A78AE × 6 |

| GPU | VideoCore VII | 128-core Maxwell | 1024-core Ampere |

| AI Performance | ~0.1 TOPS | ~0.5 TOPS | 67 TOPS |

| CUDA Generation | ❌ | Maxwell (old) | Ampere (modern) |

| TensorRT | ❌ | TRT 8 (limited) | TRT 10 (full) |

| INT8 Quantization | via TFLite only | Partial | ✅ Full |

| FP16 / BF16 | ❌ | FP16 only | ✅ Both |

| RAM | 4–8GB LPDDR4X | 4GB LPDDR4 | 8GB LPDDR5 |

| JetPack Version | N/A | 4.x (EOL soon) | 6.x (current) |

| PyTorch support | ✅ CPU only | Aging builds | ✅ Modern builds |

| Power | 5–7W | 10W | 7–25W |

| Camera / CSI | ✅ | ✅ | ✅ 2× MIPI CSI |

| Storage | microSD | microSD | microSD + NVMe M.2 |

| GPIO | 40-pin | 40-pin | 40-pin |

Why Orin Nano Super Dominates for Your Use Case

1. 67 TOPS — Not 40, Not 0.5

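The "Super" revision raises the standard Orin Nano's AI compute from 40 to 67 TOPS, roughly 1.7×. For context, going by the headline figures in the table:

- About 134× the AI performance of the old Jetson Nano (67 vs ~0.5 TOPS)

- About 670× that of the Raspberry Pi 5 (67 vs ~0.1 TOPS)

- Real-time multi-stream vision inference is comfortably within reach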
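The ratios above come straight from the headline figures in the comparison table. Here is a minimal sketch of that arithmetic, assuming the table's approximate TOPS values (the dictionary and function names are illustrative). Vendors quote these figures at different precisions, so treat the results as rough on-paper ratios rather than measured speedups.

```python
# Headline AI-compute figures from the comparison table (approximate TOPS).
# The 40 TOPS entry is the standard Orin Nano figure used elsewhere in these notes.
TOPS = {
    "Raspberry Pi 5": 0.1,
    "Jetson Nano (2019)": 0.5,
    "Jetson Orin Nano": 40.0,
    "Jetson Orin Nano Super": 67.0,
}


def speedup_over(baseline: str, target: str = "Jetson Orin Nano Super") -> float:
    """Return how many times more headline TOPS `target` has than `baseline`."""
    return TOPS[target] / TOPS[baseline]


for name in TOPS:
    if name != "Jetson Orin Nano Super":
        print(f"Orin Nano Super vs {name}: {speedup_over(name):.1f}x")
```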

2. Ampere GPU = Modern Quantization Stack

- Full INT8 + FP16 + sparse inference support

- TensorRT 10 with latest optimizations

- Directly mirrors the workflow on A100/H100 in the cloud — your skills transfer cleanly
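To make "INT8 quantization" concrete, here is a minimal sketch of generic symmetric per-tensor quantization in plain Python. It only illustrates the number format. It is not TensorRT's procedure, which derives its scales from calibration data or from quantize/dequantize nodes in the model. The function names and sample weights are made up for the example.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    # One scale for the whole tensor; fall back to 1.0 for an all-zero tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float values from the integer codes."""
    return [c * scale for c in codes]


weights = [0.02, -0.51, 0.33, 1.27, -1.0]  # illustrative values only
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_err = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("scale:", scale)
print("max round-trip error:", max_err)
```

The round-trip error is bounded by half the scale, which is why tensors with a few large outliers tend to quantize poorly under a single per-tensor scale.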

3. Developer Kit = Ready Out of the Box

- Carrier board, power supply, ports, and camera connectors all included

- Flash JetPack via NVIDIA SDK Manager and you're running

- All NVIDIA tutorials, DeepStream examples, and community answers target this exact hardware
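After flashing, a quick sanity check is to confirm which L4T release ended up on the board. This sketch assumes the usual `/etc/nv_tegra_release` file and its first-line format (`# R36 (release), REVISION: ...`). The mapping from L4T major version to JetPack series is a coarse assumption for illustration, and the helper names are hypothetical.

```python
import re
from pathlib import Path

# Coarse mapping from L4T major release to JetPack series (assumption for illustration).
L4T_TO_JETPACK = {32: "4.x", 35: "5.x", 36: "6.x"}


def parse_l4t_release(text: str):
    """Extract (major, revision) from the contents of /etc/nv_tegra_release, or None."""
    match = re.search(r"R(\d+) \(release\), REVISION: ([\d.]+)", text)
    if match is None:
        return None
    return int(match.group(1)), match.group(2)


def jetpack_series(text: str) -> str:
    """Return the JetPack series implied by the L4T release string, or 'unknown'."""
    parsed = parse_l4t_release(text)
    if parsed is None:
        return "unknown"
    return L4T_TO_JETPACK.get(parsed[0], "unknown")


release_file = Path("/etc/nv_tegra_release")
if release_file.exists():  # only present on a flashed Jetson
    print("JetPack series:", jetpack_series(release_file.read_text()))
```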

4. Future-Proof Ecosystem

- JetPack 6 = Ubuntu 22.04, CUDA 12, cuDNN 9

- Same product family as Orin NX, AGX Orin — quantized TensorRT models scale up seamlessly

- Old Jetson Nano is stuck on Ubuntu 18.04 / CUDA 10 — a dead end

Model Performance Estimates (Real-time = 30 FPS)

| Model | RPi 5 | Jetson Nano | Orin Nano (40T) | Orin Nano Super (67T) |

|---|---|---|---|---|

| YOLOv8n (INT8) | ~8 FPS | ~15 FPS | ~120 FPS | ~200+ FPS |

| YOLOv8s (FP16) | ~3 FPS | ~8 FPS | ~60 FPS | ~100+ FPS |

| MobileNetV3 | ~20 FPS | ~35 FPS | ~200 FPS | ~330+ FPS |

| ResNet-50 (INT8) | ~5 FPS | ~12 FPS | ~100 FPS | ~165+ FPS |
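One way to read the table is to turn each estimate into a per-frame time budget and check it against the 30 FPS threshold. The sketch below copies the table's estimates (using the lower bound where the table says "+"). The stream count is an idealized upper bound that ignores decoding and pre/post-processing. All names are illustrative.

```python
REAL_TIME_FPS = 30  # threshold from the table heading

# Estimated FPS copied from the table above (lower bounds for "+" entries).
ESTIMATES = {
    "YOLOv8n (INT8)": {"RPi 5": 8, "Jetson Nano": 15, "Orin Nano": 120, "Orin Nano Super": 200},
    "YOLOv8s (FP16)": {"RPi 5": 3, "Jetson Nano": 8, "Orin Nano": 60, "Orin Nano Super": 100},
    "MobileNetV3": {"RPi 5": 20, "Jetson Nano": 35, "Orin Nano": 200, "Orin Nano Super": 330},
    "ResNet-50 (INT8)": {"RPi 5": 5, "Jetson Nano": 12, "Orin Nano": 100, "Orin Nano Super": 165},
}


def frame_time_ms(fps: float) -> float:
    """Time available per frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps


def real_time_devices(model: str) -> list:
    """Devices whose estimated FPS meets the real-time threshold for `model`."""
    return [dev for dev, fps in ESTIMATES[model].items() if fps >= REAL_TIME_FPS]


def max_streams(fps: float) -> int:
    """Idealized number of 30 FPS streams one device could serve at `fps`."""
    return int(fps // REAL_TIME_FPS)


for model in ESTIMATES:
    super_fps = ESTIMATES[model]["Orin Nano Super"]
    print(f"{model}: real-time on {real_time_devices(model)}, "
          f"up to {max_streams(super_fps)} streams on the Super "
          f"({frame_time_ms(super_fps):.1f} ms/frame)")
```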

Final Verdict on Devices

| Option | Verdict |

|---|---|

| RPi 5 | Fine for non-AI tasks; underpowered for vision |

| Jetson Nano (old) | Avoid — aging ecosystem, dead end |

| Jetson Orin Nano 8GB | Good, but superseded |

| Jetson Orin Nano Super ✅ | Best-in-class edge AI for prototyping — modern stack, 67 TOPS, devkit ready |

If you're serious about prototyping and deploying quantized vision models, the Jetson Orin Nano Super Developer Kit is the definitive choice. The ~€100 premium over a bare Orin Nano module gets you everything you need to start immediately, with headroom to push serious models.

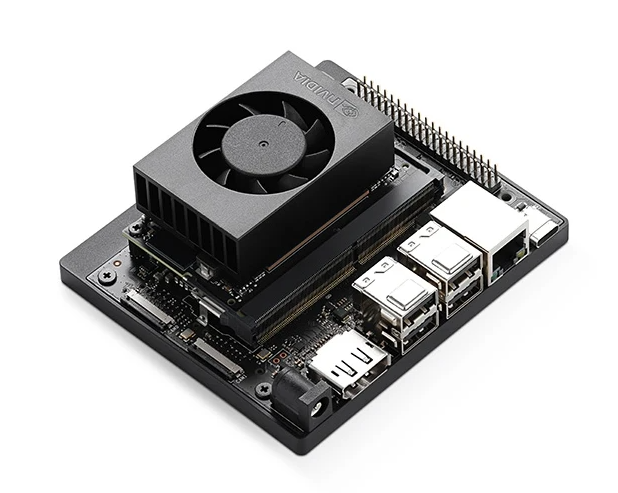

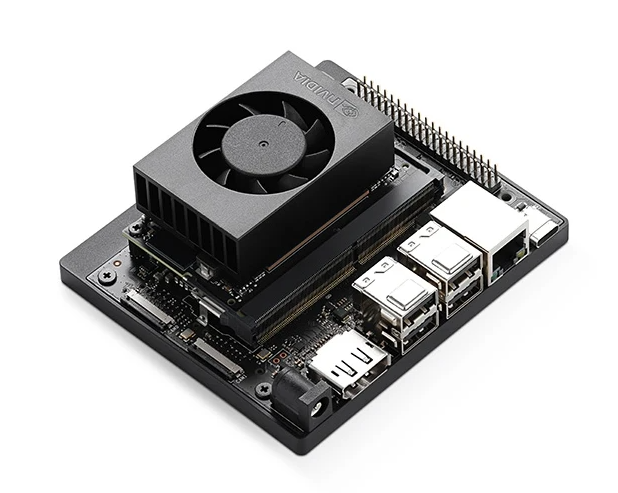

Why get the "Jetson Orin Nano Super" dev kit?

What is a Carrier Board?

Think of it like a motherboard for the Jetson module. The Jetson SOM (System on Module) is essentially just the brain — CPU, GPU, RAM — packed onto a small board with no ports or connectors of its own. It's useless alone. The carrier board gives it everything needed to actually function.

This is exactly why the Developer Kit is the right buy for prototyping — it includes the carrier board, power supply, cooling, and all connectors bundled. The module alone is just a chip with nowhere to go.

The Parts

SOM (System on Module)

The Jetson compute module itself: a small PCB carrying the processor, GPU, RAM, and storage controller. It plugs into the carrier board via a high-density connector. This is what you're paying for performance-wise; the 67 TOPS lives here.

Carrier Board

The base board the SOM sits on. It breaks out all the SOM's signals into usable ports and connectors:

| Port / Feature | What It's For |

|---|---|

| USB-A ports | Keyboard, mouse, peripherals |

| USB-C | Data (e.g. flashing, device mode); power comes in via the barrel jack |

| Gigabit Ethernet | Network, SSH access |

| M.2 NVMe slots (2×) | Fast SSD storage |

| microSD slot | Boot drive or extra storage |

| 40-pin GPIO header | Sensors, LEDs, I2C/SPI devices |

| 2× MIPI CSI connectors | Direct camera module input |

| Fan header | Active cooling control |

| DC barrel jack | Power input |

MIPI CSI Camera Connectors

Flat ribbon-cable connectors for dedicated camera modules (e.g. IMX219, IMX477). These typically give higher framerates and lower latency than USB webcams, which matters for real-time vision pipelines.
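On Jetson, CSI cameras are commonly read through a GStreamer pipeline built around `nvarguscamerasrc`. The helper below only assembles such a pipeline string. The resolution, frame rate, and function name are assumptions for illustration, and the modes actually available depend on the camera module and its driver.

```python
def csi_pipeline(sensor_id: int = 0, width: int = 1280, height: int = 720,
                 fps: int = 30, flip_method: int = 0) -> str:
    """Build a GStreamer pipeline string that delivers BGR frames from a CSI camera."""
    return (
        f"nvarguscamerasrc sensor-id={sensor_id} ! "
        f"video/x-raw(memory:NVMM), width=(int){width}, height=(int){height}, "
        f"framerate=(fraction){fps}/1 ! "
        f"nvvidconv flip-method={flip_method} ! "
        "video/x-raw, format=(string)BGRx ! videoconvert ! "
        "video/x-raw, format=(string)BGR ! "
        # Keep only the newest frame so a slow consumer does not add latency.
        "appsink drop=true max-buffers=1"
    )


print(csi_pipeline(sensor_id=0))

# Typical use, assuming an OpenCV build with GStreamer support:
#   import cv2
#   cap = cv2.VideoCapture(csi_pipeline(), cv2.CAP_GSTREAMER)
```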

40-pin GPIO Header

Same form factor as a Raspberry Pi header. Communicate with external hardware over I2C, SPI, UART, or digital I/O. Useful for triggering hardware or reading sensors alongside your vision pipeline.
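As a sketch of "triggering hardware" from the header, the function below pulses one output pin using the `Jetson.GPIO` package, whose API mirrors `RPi.GPIO`. The pin number and function name are arbitrary choices for illustration. Depending on the JetPack release, the pin may first need to be configured as an output in the board's pinmux, so treat this as a starting point rather than a verified recipe.

```python
import time


def pulse_pin(board_pin: int = 7, seconds: float = 0.1) -> None:
    """Drive one 40-pin header pin high for `seconds`, then low again."""
    # Imported lazily because the package is only usable on a Jetson.
    import Jetson.GPIO as GPIO

    GPIO.setmode(GPIO.BOARD)  # address pins by physical header position
    GPIO.setup(board_pin, GPIO.OUT, initial=GPIO.LOW)
    try:
        GPIO.output(board_pin, GPIO.HIGH)
        time.sleep(seconds)
        GPIO.output(board_pin, GPIO.LOW)
    finally:
        GPIO.cleanup()  # release the pin even if something fails


# On the device, e.g. when a detection fires:
#   pulse_pin(board_pin=7, seconds=0.05)
```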

M.2 NVMe Slot

Slot for a fast SSD. Important for storing large model weights, fast dataset loading during development, and a much snappier OS than running off microSD.

Cooling (Fan + Heatsink)

At 67 TOPS the Orin Nano Super runs hot under sustained inference load. The included active cooler keeps it from throttling during long training or inference sessions.
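To watch for throttling during a long run, temperatures can be polled from the standard Linux thermal sysfs interface. This is a minimal sketch: zone names vary by board and L4T release, and the function name is illustrative. NVIDIA's `tegrastats` tool reports similar information from the command line.

```python
from pathlib import Path


def read_thermal_zones(root: str = "/sys/class/thermal") -> dict:
    """Return {zone name: temperature in °C} for every readable thermal zone."""
    temps = {}
    for zone in sorted(Path(root).glob("thermal_zone*")):
        try:
            name = (zone / "type").read_text().strip()
            millideg = int((zone / "temp").read_text().strip())
        except (OSError, ValueError):
            continue  # some zones are not readable or report no value
        temps[name] = millideg / 1000.0  # sysfs reports millidegrees Celsius
    return temps


for name, celsius in read_thermal_zones().items():
    print(f"{name}: {celsius:.1f} °C")
```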

Mental Model

[ Jetson SOM ] ← the brain

↕ plugs into

[ Carrier Board ] ← the body

↕ connects to

[ Cameras / Storage / Network / Peripherals ]

In production you'd swap the devkit carrier for a custom or third-party board designed for your product's form factor — smaller, ruggedized, only the ports you need. For prototyping, the devkit carrier gives you everything in one.